9/3/2020

Artificial intelligence, more commonly called AI, has long been the subject of sci-fi movies, speculation and fear. How will it help the field of ultrasound? What might the unintended consequences be? So many questions with anything automated.

Before we can talk about AI as it relates to ultrasound, let us first look at what it is. The general definition of AI is the computations that result in machine learning, where machines perform tasks that were previously carried out through logical human reasoning. This involves many algorithms and really good programmers. There are a couple of basic categories of AI. Narrow, or weak, AI is where a computer performs a single task. An example of this is a Google search. You put in simple words and a list of related items pops up for you to click on. Strong AI, or Artificial General Intelligence, is the use of logic and decision making by machines. An example that points in this direction would be robots that perform surgery with human guidance, like the da Vinci system. There are other subcategories within each of these two areas.

The first subtype is called reactive machines. The computer or machine performs programmed tasks, but only when it is told to, over and over again. It does not remember having done the same task before, so past tasks have no bearing on current or future decisions. The next type is limited memory, or class II, machines. Here there is memory, built on repetitive experience, for doing certain tasks again and again. An example of this is the field on the patient demographic screen labeled referring doctor. If you type the same name a few times, the machine remembers it and holds it in the drop-down list. However, it cannot know ahead of time which doctor will be needed for which patient and enter it for you. (For those of you who use worklists, that is a different task.) The third type is called Theory of Mind. This is the iRobot stuff (class III). Devices have to be able to form representations of words and shades of meaning for verbal commands. The instruments must have significant memory in order to do their tasks, and they exhibit behavior based on their own decisions. The last type is self-awareness, which is an extension of theory of mind. If you remember Rosie from the Jetsons cartoon, she is a good example of this category. We are far from this level of sophistication. If we want these types of machines to evolve, we humans need to have a more highly evolved sense of self-awareness as well. This involves deep machine learning.
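The referring doctor drop-down described above can be sketched in a few lines of Python. This is only an illustration of the limited memory idea, not how any ultrasound system is actually programmed. The class name `ReferringDoctorField`, its methods, and the threshold of three entries are all assumptions made for the example (the text only says "a few times").

```python
from collections import Counter


class ReferringDoctorField:
    """Hypothetical demographic-screen field that remembers frequently typed names."""

    def __init__(self, min_uses=3):
        # min_uses is an assumed threshold standing in for "a few times".
        self.min_uses = min_uses
        self.counts = Counter()

    def enter(self, name):
        """Record one manual entry of a referring doctor's name."""
        self.counts[name.strip()] += 1

    def dropdown(self):
        """Return the remembered names, most frequently typed first."""
        remembered = [
            (name, uses)
            for name, uses in self.counts.items()
            if uses >= self.min_uses
        ]
        # Sort by descending use count, then alphabetically for ties.
        remembered.sort(key=lambda item: (-item[1], item[0]))
        return [name for name, _ in remembered]


doctor_field = ReferringDoctorField()
for _ in range(3):
    doctor_field.enter("Dr. Smith")
doctor_field.enter("Dr. Jones")

print(doctor_field.dropdown())
```

The field only counts what has already been typed. Nothing in it can predict which doctor belongs to the next patient, which is exactly the limit of this class of machine.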

There are several ways that the medical community is adapting AI to help in the delivery of health care. The first part of our process is patient registration. Then the doctor examines the patient, an order for imaging is placed, and the ultrasound is performed. Lastly, the doctor interprets the images and issues a final report to the referring physician. From a sonographer’s perspective, we need a better way to collect histories from patients to ensure accuracy. In many institutions sonographers are barred from looking at the patient’s whole chart to gain the best information for their decision making. In other scenarios there is a language barrier or, because of HIPAA, the sonographer works in a business that is entirely separate from the physician who saw the patient. We all know that patients’ memories can be spotty at best. The best example is the order for a gallbladder ultrasound when the patient has already had the gallbladder removed. If AI had been implemented, this order would never have been generated. In this example AI would have been useful in the registration process and when the doctor examines the patient.
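To make the gallbladder example concrete, here is a minimal sketch of the kind of check that could run when an order is placed. It is a simple hand-written rule, not AI, and it stands in for whatever a real system would do. The `PatientRecord` class, the `CONFLICTS` table, and the `check_order` function are hypothetical names invented for this illustration.

```python
from dataclasses import dataclass, field

# Hypothetical table: an ordered exam mapped to prior surgeries that
# make the order questionable. A real system would need far more than this.
CONFLICTS = {
    "gallbladder ultrasound": {"cholecystectomy"},
}


@dataclass
class PatientRecord:
    name: str
    surgical_history: set = field(default_factory=set)


def check_order(record, exam):
    """Return a list of warnings; an empty list means no conflict was found."""
    history = {item.lower() for item in record.surgical_history}
    conflicts = CONFLICTS.get(exam.lower(), set()) & history
    return [
        f"{exam} ordered, but chart lists prior {surgery}"
        for surgery in sorted(conflicts)
    ]


patient = PatientRecord("Example Patient", {"Cholecystectomy"})
for warning in check_order(patient, "Gallbladder ultrasound"):
    print(warning)
```

A check like this only works if the surgical history is already in the chart, which is why the registration step and the doctor's exam matter so much.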

Now let us look at how AI will affect the way we actually perform ultrasound exams. In the age of the B scan with an articulated arm, we had to set the liver curve at the beginning of each day on a patient who had a normal liver. Then we used two foot pedals and many adjustments to get lousy images. Fast forward several decades and we have machines that channel us based on the selection of presets. As discussed in a previous blog about presets, this one step decides many things going forward. The idea is to speed up the ultrasound exam and make it less user dependent. Are there areas where we need assistance? Sure. The most pressing need is a way to deal with obesity. We could add technology to make PACS seamless. There could be room for improvement in imaging sensitivity. Bear in mind that here at Ultra Select Medical we use machines from many manufacturers, so the statements here are based on a wide array of usage.

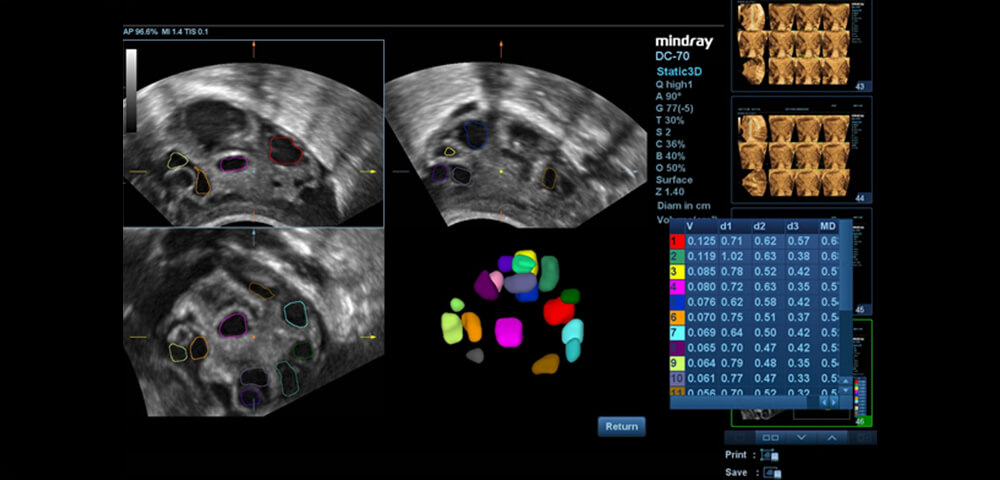

Some examples of how the algorithms help us do our job are the special (optional) functions of the machines. There are the auto functions like auto EF, AFI and TQI in cardiac imaging. For gyn, an array of manufacturers offer auto follicle measurement. In the ob arena there is a variety of smart or auto 3D-based functions that speed up measurements. Then there are the 3D/4D advancements as a whole and all of their applications across all specialties.

These generally add time to an exam. There will come a time when insurance reimbursement allows the technology to be fully used, and then exams will speed up. Reimbursement has a hold over what can and cannot be used, because it determines what exams are paid for. Here is a thought: maybe the insurers should hire sonographers to guide them in their policies regarding the use of technology.

Then there are the algorithms that help doctors make more accurate diagnoses when interpreting the images. Samsung Medison's software solution uses S-Detect for breast, which was approved in 2016. However, it is only FDA approved for shape and orientation. This software shows detection and classification of breast disease according to the BI-RADS classification. This is just one example of how AI will be used to help the accuracy of diagnosis.

We communicate with sonographers and doctors from all over the world about their needs and wants in ultrasound. However, the manufacturers of these advancements must be careful not to tread on the hard-won professional identities of sonographers. We do not want to be dumbed down by having a machine think for us. That may be one of the unintended consequences. Our profession is unique in its education and knowledge base and we want to keep it that way. One example of what I am speaking of is a company that is developing ultrasound for in-home use. If anyone can do it, then how will our professional standing be affected? Just something to consider.

What do we see coming down the road? No, we do not see robots doing our job. There will be remote ultrasound, like what we saw being done in space, with a qualified sonographer or doctor guiding the process from afar. We predict that exams will take less time and that the way structures are measured will be streamlined. There will be improvements in the pre- and post-exam process. The use of 3D and 4D will explode as soon as the insurance companies adjust their policies. There are other uses that I cannot speak to, as Ultra Select Medical will be evaluating and developing them as proprietary devices. So that will keep you in suspense.
